---

title: |

All BANG, little buck: Need-related experiences are weakly linked with behavior in the video game domain

author:

- name: Nick Ballou

corresponding: true

orcid: 0000-0003-4126-0696

email: nick@nickballou.com

affiliations:

- ref: 1

- ref: 2

roles:

- conceptualization

- data curation

- investigation

- methodology

- formal analysis

- visualization

- writing

- editing

- name: Tamás Andrei Földes

corresponding: false

orcid: 0000-0002-0623-9149

email: tamas.foldes@oii.ox.ac.uk

affiliations:

- ref: 2

roles:

- data curation

- methodology

- validation

- editing

- name: Matti Vuorre

corresponding: false

orcid: 0000-0001-5052-066X

email: mjvuorre@uvt.nl

affiliations:

- ref: 3

roles:

- methodology

- funding acquisition

- editing

- name: Thomas Hakman

corresponding: false

orcid: 0009-0009-8292-2482

email: thomas.hakman@oii.ox.ac.uk

affiliations:

- ref: 2

roles:

- investigation

- data curation

- editing

- name: Kristoffer Magnusson

corresponding: false

orcid: 0000-0003-0713-0556

affiliations:

- ref: 4

- ref: 2

roles:

- software

- editing

- name: Andrew K Przybylski

corresponding: true

email: andy.przybylski@oii.ox.ac.uk

orcid: 0000-0001-5547-2185

affiliations:

- ref: 2

roles:

- conceptualization

- funding acquisition

- project administration

- editing

affiliations:

- id: 1

name: Imperial College London

department: Dyson School of Design Engineering

- id: 2

name: University of Oxford

department: Oxford Internet Institute

- id: 3

name: Tilburg University

department: Department of Social Psychology

- id: 4

name: Karolinska Institute

csl: https://www.zotero.org/styles/apa

bibliography: references.bib

keywords: [motivation, digital trace data, video games, self-determination theory, displacement, compensatory behavior]

editor_options:

chunk_output_type: console

markdown:

wrap: none

knitr:

opts_chunk:

cache: true

warning: false

message: false

fig-width: 6

fig-asp: 0.618

out-width: 80%

fig-align: center

code-links: repo

format:

html:

theme:

light: united

dark: darkly

toc: true

toc-depth: 2

toc-location: right

number-sections: true

fontsize: 13pt

code-fold: true

code-summary: "Show code"

code-tools: true

echo: true

embed-resources: false

page-layout: article

grid:

body-width: 900px

margin-width: 250px

other-links:

- text: Stage 1 Registered Report

href: https://osf.io/pb5nu

icon: file-pdf

- text: Data

href: https://zenodo.org/records/17609160

icon: link

preprint-typst:

echo: false

wordcount: true

theme: jou

citeproc: true

mainfont: "Liberation Serif"

sansfont: "Liberation Sans"

include-before-body:

- text: "#show <refs>: set par(hanging-indent: 1.4em)"

docx:

echo: false

---

```{r}

#| label: libraries

#| code-summary: "Show code (Load required R packages)"

#| cache: false

library(tidyverse)

library(glue)

library(glmmTMB)

library(lme4)

library(marginaleffects)

library(mice)

library(howManyImputations)

library(future)

library(modelsummary)

library(lubridate)

library(DHARMa)

library(brms)

library(broom.mixed)

library(psych)

library(tinytable)

library(litedown)

library(patchwork)

library(ggridges)

library(ggdist)

library(splines)

library(scales)

library(janitor)

```

```{r}

#| label: setup

#| code-summary: "Show code (Set random seed and global options)"

#| cache: false

set.seed(8675309)

options(scipen = 999, timeout = 600)

theme_set(theme_minimal())

theme_update(

plot.background = element_rect(fill = "white", color = NA),

panel.background = element_rect(fill = "white", color = NA),

strip.background = element_rect(fill = "black"),

strip.text = element_text(color = "white", size = 10),

axis.text.y = element_text(color = "black", size = 10),

axis.text.x = element_text(color = "black", size = 10),

panel.grid.minor = element_blank(),

panel.border = element_rect(

colour = "black",

fill = NA,

linewidth = 1

)

)

# Define consistent color palette for all visualizations

colors <- list(

game_ns = "#009988", # Teal for game need satisfaction

global_ns = "#44BB99", # Light teal for global need satisfaction

global_nf = "#EE6677", # Red for global need frustration

nintendo = "#E60012", # Nintendo red

xbox = "#107C10", # Xbox green

steam = "#215e8a", # Steam dark blue

within = "#228833", # Green for within-person variance

between = "#BBBBBB" # Gray for between-person variance

)

# Define human-readable labels for snake_case column names

labels <- c(

# Base variables

"game_ns" = "Game need satisfaction",

"game_nf" = "Game need frustration",

"global_ns" = "Global need satisfaction",

"global_nf" = "Global need frustration",

"session_length" = "Session length",

"session_gap" = "Time to next session",

# Within-person

"game_ns_cw" = "Game need satisfaction (within)",

"game_nf_cw" = "Game need frustration (within)",

"global_ns_cw" = "Global need satisfaction (within)",

"global_nf_cw" = "Global need frustration (within)",

# Between-person

"game_ns_cb" = "Game need satisfaction (between)",

"game_nf_cb" = "Game need frustration (between)",

"global_ns_cb" = "Global need satisfaction (between)",

"global_nf_cb" = "Global need frustration (between)",

# Within-person (alternate)

"game_ns (within-person)" = "Game need satisfaction (within-person)",

"global_ns (within-person)" = "Global need satisfaction (within-person)",

"global_nf (within-person)" = "Global need frustration (within-person)",

# Between-person (alternate)

"game_ns (between-person)" = "Game need satisfaction (between-person)",

"global_ns (between-person)" = "Global need satisfaction (between-person)",

"global_nf (between-person)" = "Global need frustration (between-person)",

# Interaction

"game_ns_cw:global_nf_cw" = "Game need satisfaction × Global need frustration (within)",

# Displacement

"displaced_core_domain" = "Displaced core domain",

"displaced_core_domainTRUE" = "Displaced core domain",

# Variance components

"Within-person" = "Within-person",

"Between-person" = "Between-person",

# Other

"(Intercept)" = "Intercept"

)

# Load custom functions

source("R/helpers.R")

```

```{r}

#| label: load-data

#| code-summary: "Show code (Download and load raw data from Zenodo)"

# Download data from Zenodo (one-time download, then cached locally)

zenodo_url <- "https://zenodo.org/records/17609160/files/digital-wellbeing/open-play-v1.0.0.zip?download=1"

zip_path <- "data/open-play-v1.0.0.zip"

if (!file.exists(zip_path)) {

dir.create(dirname(zip_path), showWarnings = FALSE, recursive = TRUE)

# Set longer timeout for large file (194MB) - default is 60 seconds

options(timeout = max(600, getOption("timeout")))

message("Downloading 194MB file from Zenodo (may take a few minutes)...")

tryCatch(

{

download.file(zenodo_url, zip_path, mode = "wb", method = "libcurl")

message("Download complete!")

},

error = function(e) {

message("Download failed. Please download manually from:")

message(zenodo_url)

message(glue("And save to: {normalizePath(zip_path, mustWork = FALSE)}"))

stop(e)

}

)

}

# Extract zip if not already extracted

extract_dir <- "data/open-play-v1.0.0"

if (!dir.exists(extract_dir)) {

message("Extracting zip archive...")

unzip(zip_path, exdir = "data")

# Rename the extracted folder to a simpler name

extracted_folder <- list.dirs("data", recursive = FALSE, full.names = TRUE)

extracted_folder <- extracted_folder[grepl(

"digital-wellbeing-open-play",

extracted_folder

)]

if (length(extracted_folder) == 1 && extracted_folder != extract_dir) {

file.rename(extracted_folder, extract_dir)

}

}

# Read files from extracted directory

intake <- read_csv(

file.path(extract_dir, "data/clean/survey_intake.csv.gz"),

guess_max = 10000

)

surveys <- read_csv(file.path(

extract_dir,

"data/clean/survey_daily.csv.gz"

)) |>

filter(pid %in% intake$pid)

xbox <- read_csv(file.path(extract_dir, "data/clean/xbox.csv.gz"))

nintendo <- read_csv(file.path(extract_dir, "data/clean/nintendo.csv.gz"))

steam <- read_csv(

file.path(extract_dir, "data/clean/steam.csv.gz"),

guess_max = 10000

)

# Load helper functions

source(file.path(extract_dir, "R/helpers.R"))

```

```{r}

#| label: prepare-telemetry

#| code-summary: "Show code (Process telemetry into hourly playtime with timezone adjustments)"

# merge with the local time zone offsets from intake

# Note: We keep country and local_timezone for DST-aware conversion

tz_map <- intake |>

mutate(

pid = as.character(pid),

country,

local_timezone,

.keep = "none"

) |>

distinct(pid, .keep_all = TRUE)

# aggregate data at each level of granularity (session, hourly, daily)

# --- 1) SESSION-LEVEL (Nintendo + Xbox) ------------------------------------

sessions_telemetry <- bind_rows(

xbox |> mutate(platform = "Xbox"),

nintendo |> mutate(platform = "Nintendo")

) |>

left_join(tz_map, by = "pid") |>

filter(!is.na(local_timezone)) |>

mutate(

# Calculate DST-aware offset for each timestamp

offset_start = get_dst_offset(session_start, country, local_timezone),

offset_end = get_dst_offset(session_end, country, local_timezone),

# Add offset to get local time values (keeping UTC label for compatibility)

start_local = session_start + offset_start,

end_local = session_end + offset_end,

duration_min = as.numeric(difftime(

session_end,

session_start,

units = "mins"

))

) |>

filter(

!is.na(session_start),

!is.na(session_end),

session_end > session_start,

duration_min >= 1

)

# --- 2) HOURLY (Nintendo + Xbox expanded) ----------------------------------

# expand sessions into local-hour bins, compute overlap minutes, and add UTC hour

hourly_from_sessions <- sessions_telemetry |>

filter(!is.na(start_local), !is.na(end_local)) |>

mutate(

h0_local = floor_date(start_local, "hour"),

h1_local = floor_date(end_local - seconds(1), "hour"),

n_hours = as.integer(difftime(h1_local, h0_local, units = "hours")) + 1

) |>

filter(!is.na(n_hours), n_hours > 0) |>

tidyr::uncount(n_hours, .remove = FALSE, .id = "k") |>

mutate(

hour_start_local = h0_local + hours(k - 1),

minutes = pmax(

0,

as.numeric(difftime(

pmin(end_local, hour_start_local + hours(1)),

pmax(start_local, hour_start_local),

units = "mins"

))

),

# Convert back to UTC (this preserves the instant, just changes label)

hour_start_utc = with_tz(hour_start_local, tzone = "UTC")

) |>

select(pid, platform, title_id, hour_start_local, hour_start_utc, minutes) |>

group_by(pid, platform, title_id, hour_start_local, hour_start_utc) |>

summarise(minutes = sum(minutes, na.rm = TRUE), .groups = "drop")

hourly_from_steam <- steam |>

select(pid, title_id, datetime_hour_start, minutes) |>

mutate(pid = as.character(pid)) |>

left_join(tz_map, by = "pid") |>

filter(!is.na(local_timezone)) |>

mutate(

platform = "Steam",

hour_start_utc = datetime_hour_start,

offset = get_dst_offset(datetime_hour_start, country, local_timezone),

# Note: These are local time values with UTC labels due to R's POSIXct limitation

hour_start_local = datetime_hour_start + offset

) |>

select(pid, platform, title_id, hour_start_local, hour_start_utc, minutes) |>

group_by(pid, platform, title_id, hour_start_local, hour_start_utc) |>

summarise(minutes = sum(minutes, na.rm = TRUE), .groups = "drop")

hourly_telemetry <- bind_rows(hourly_from_sessions, hourly_from_steam)

# --- 3) DAILY (Nintendo + Xbox + Steam; collapse hourly to days) -----------

daily_telemetry <- hourly_telemetry |>

mutate(

day_local = as.Date(hour_start_local),

) |>

group_by(pid, platform, day_local) |>

summarise(minutes = sum(minutes, na.rm = TRUE), .groups = "drop")

# --- 4) weekly

weekly_telemetry <- daily_telemetry |>

mutate(

week = floor_date(day_local, "week")

) |>

group_by(pid, platform, week) |>

summarise(minutes = sum(minutes, na.rm = TRUE), .groups = "drop")

telemetry_spans <- daily_telemetry |>

group_by(pid, platform) |>

summarise(

telemetry_start = min(day_local, na.rm = TRUE),

telemetry_end = max(day_local, na.rm = TRUE) + hours(1), # end of last hour bin

week = floor_date(telemetry_end, "week"),

n_weeks = as.integer(difftime(

telemetry_end,

telemetry_start,

units = "weeks"

)) +

1,

.groups = "drop"

)

```

```{r}

#| label: prepare-survey

#| code-summary: "Show code (Prepare survey data and calculate play windows)"

# Step 1: Prepare data for imputation

# Note: We only impute individual items, not composites (to avoid collinearity)

# Composites will be calculated post-imputation

surveys <- surveys |>

filter(!bpnsfs_failed_att_check) |>

mutate(wave = as.factor(wave))

# Calculate play windows (both 24h and 12h for sensitivity analyses)

play_24h <- surveys |>

mutate(

survey_time = as.POSIXct(date, tz = "UTC"),

window_end = survey_time + hours(24)

) |>

left_join(

hourly_telemetry |> select(pid, hour_start_utc, minutes),

by = join_by(

pid,

y$hour_start_utc >= x$survey_time,

y$hour_start_utc < x$window_end

)

) |>

summarise(

play_minutes_24h = sum(minutes, na.rm = TRUE),

.by = c(pid, survey_time)

)

play_12h <- surveys |>

mutate(

survey_time = as.POSIXct(date, tz = "UTC"),

window_end = survey_time + hours(12)

) |>

left_join(

hourly_telemetry |> select(pid, hour_start_utc, minutes),

by = join_by(

pid,

y$hour_start_utc >= x$survey_time,

y$hour_start_utc < x$window_end

)

) |>

summarise(

play_minutes_12h = sum(minutes, na.rm = TRUE),

.by = c(pid, survey_time)

)

play_6h <- surveys |>

mutate(

survey_time = as.POSIXct(date, tz = "UTC"),

window_end = survey_time + hours(6)

) |>

left_join(

hourly_telemetry |> select(pid, hour_start_utc, minutes),

by = join_by(

pid,

y$hour_start_utc >= x$survey_time,

y$hour_start_utc < x$window_end

)

) |>

summarise(

play_minutes_6h = sum(minutes, na.rm = TRUE),

.by = c(pid, survey_time)

)

play_24h_pre <- surveys |>

mutate(

survey_time = as.POSIXct(date, tz = "UTC"),

window_start = survey_time - hours(24)

) |>

left_join(

hourly_telemetry |> select(pid, hour_start_utc, minutes),

by = join_by(

pid,

y$hour_start_utc >= x$window_start,

y$hour_start_utc < x$survey_time

)

) |>

summarise(

play_minutes_24h_pre = sum(minutes, na.rm = TRUE),

.by = c(pid, survey_time)

)

# Prepare intake auxiliary variables

intake_aux <- intake |>

mutate(

gender = ifelse(gender %in% c("Man", "Woman"), gender, "Non-binary/other"),

# Extract numeric values from WEMWBS response strings

across(wemwbs_1:wemwbs_7, ~ as.numeric(str_extract(.x, "^\\d+"))),

wemwbs = rowMeans(pick(wemwbs_1:wemwbs_7), na.rm = TRUE)

) |>

select(

pid,

age,

gender,

edu_level,

employment,

marital_status,

life_sat_baseline = life_sat,

wemwbs,

diaries_completed,

self_reported_weekly_play

)

# Load pre-classified activity categories

activity_categories <- read_csv("data/activity_categories.csv") |>

mutate(wave = as.factor(wave))

surveys <- surveys |>

mutate(survey_time = as.POSIXct(date, tz = "UTC")) |>

left_join(play_24h, by = c("pid", "survey_time")) |>

left_join(play_12h, by = c("pid", "survey_time")) |>

left_join(play_6h, by = c("pid", "survey_time")) |>

left_join(play_24h_pre, by = c("pid", "survey_time")) |>

left_join(intake_aux, by = "pid") |>

left_join(activity_categories, by = c("pid", "wave")) |>

mutate(

# Rename self-reported play to distinguish from telemetry

self_reported_played_24h = played24hr,

# Telemetry-based play (post-survey windows)

telemetry_played_any_24h = play_minutes_24h > 0,

telemetry_played_any_12h = play_minutes_12h > 0,

telemetry_played_any_6h = play_minutes_6h > 0,

# Telemetry-based play (pre-survey window)

telemetry_played_any_24h_pre = play_minutes_24h_pre > 0

) |>

select(

pid,

wave,

# Auxiliary predictors (not imputed)

date,

age,

gender,

edu_level,

employment,

marital_status,

life_sat_baseline,

wemwbs,

diaries_completed,

self_reported_weekly_play,

# Self-reported play (used to control BANGS imputation)

self_reported_played_24h,

# Variables to impute: individual items only (composites calculated post-imputation)

bpnsfs_1,

bpnsfs_2,

bpnsfs_3,

bpnsfs_4,

bpnsfs_5,

bpnsfs_6,

bangs_1,

bangs_2,

bangs_3,

bangs_4,

bangs_5,

bangs_6,

displaced_core_domain,

# Telemetry (not imputed in mice, but needed for analysis)

telemetry_played_any_24h,

play_minutes_24h,

telemetry_played_any_12h,

play_minutes_12h,

telemetry_played_any_6h,

play_minutes_6h,

telemetry_played_any_24h_pre,

play_minutes_24h_pre

)

```

```{r}

#| label: mice

#| code-summary: "Show code (Run MICE imputation on analytical sample)"

#| results: hide

#| fig-show: hide

#| freeze: false

# Step 2: Multiple imputation in wide format

#

# Main analysis uses analytical sample only (≥15 waves, N~555)

# - Higher quality data → stable FMI estimates

# - Run determine_m_imputations.qmd to calculate required M

# - Update M_IMPUTATIONS to recommended value

#

# Sensitivity analysis (full sample imputation) in separate chunk below

# Define minimum waves threshold for analytical sample

min_waves <- 15

full_eligible_pids <- unique(surveys$pid)

message(glue(

"Imputing analytical sample: {length(unique(surveys$pid[surveys$diaries_completed >= min_waves]))}/{length(full_eligible_pids)} participants with ≥{min_waves} waves"

))

# Separate telemetry (no missing data) from survey variables (to be imputed)

# Filter to analytical sample here without overwriting surveys

telemetry_vars <- surveys |>

filter(diaries_completed >= min_waves) |>

select(

pid,

wave,

date,

telemetry_played_any_24h,

play_minutes_24h,

telemetry_played_any_12h,

play_minutes_12h,

telemetry_played_any_6h,

play_minutes_6h,

telemetry_played_any_24h_pre,

play_minutes_24h_pre

)

# Convert to wide format for imputation

# Auxiliary variables go in id_cols (used as predictors, not imputed)

# Only impute individual items, not composite scores (to avoid collinearity)

surveys_wide <- surveys |>

filter(diaries_completed >= min_waves) |>

select(

pid,

wave,

age,

gender,

edu_level,

employment,

marital_status,

life_sat_baseline,

wemwbs,

diaries_completed,

self_reported_weekly_play,

self_reported_played_24h,

displaced_core_domain,

bpnsfs_1,

bpnsfs_2,

bpnsfs_3,

bpnsfs_4,

bpnsfs_5,

bpnsfs_6,

bangs_1,

bangs_2,

bangs_3,

bangs_4,

bangs_5,

bangs_6

) |>

pivot_wider(

id_cols = c(

pid,

age,

gender,

edu_level,

employment,

marital_status,

life_sat_baseline,

wemwbs,

diaries_completed,

self_reported_weekly_play

),

names_from = wave,

values_from = c(

self_reported_played_24h,

displaced_core_domain,

bpnsfs_1,

bpnsfs_2,

bpnsfs_3,

bpnsfs_4,

bpnsfs_5,

bpnsfs_6,

bangs_1,

bangs_2,

bangs_3,

bangs_4,

bangs_5,

bangs_6

),

names_sep = "_w"

)

# Set up imputation model

M_IMPUTATIONS <- 27

# Set up imputation methods

# Self-reported play: use logistic regression (binary Yes/No)

played_vars <- names(surveys_wide)[grepl(

"^self_reported_played_24h_w",

names(surveys_wide)

)]

# BPNSFS items: always impute when missing (global need satisfaction)

bpnsfs_vars <- names(surveys_wide)[grepl(

"^bpnsfs_[1-6]_w",

names(surveys_wide)

)]

# BANGS items: only impute conditionally (see where matrix below)

bangs_vars <- names(surveys_wide)[grepl("^bangs_[1-6]_w", names(surveys_wide))]

# Displaced core domain: always impute when missing

displaced_vars <- names(surveys_wide)[grepl(

"^displaced_core_domain_w",

names(surveys_wide)

)]

methods <- setNames(rep("", ncol(surveys_wide)), names(surveys_wide))

methods[played_vars] <- "logreg" # Binary outcome

methods[c(bpnsfs_vars, bangs_vars, displaced_vars)] <- "pmm"

# Explicitly exclude person-level variables from imputation

# These either have no within-person variance or are complete

person_level_vars <- c(

"pid",

"age",

"gender",

"edu_level",

"employment",

"marital_status",

"life_sat_baseline",

"wemwbs",

"diaries_completed",

"self_reported_weekly_play"

)

methods[person_level_vars] <- ""

# Create WHERE matrix for conditional imputation

# Default: impute all missing cells

where_matrix <- is.na(surveys_wide)

# For BANGS items: only impute when person reported playing (or play status unknown)

# Do NOT impute when self_reported_played_24h == "No"

for (bangs_var in bangs_vars) {

wave_num <- str_extract(bangs_var, "\\d+$")

played_var <- paste0("self_reported_played_24h_w", wave_num)

if (played_var %in% names(surveys_wide)) {

# Set to FALSE (don't impute) where played=="No" AND bangs is missing

no_play <- surveys_wide[[played_var]] == "No" &

is.na(surveys_wide[[bangs_var]])

where_matrix[no_play, bangs_var] <- FALSE

}

}

message(glue("Conditional imputation setup:"))

message(glue(" BANGS items: only impute when played=Yes or played=NA"))

message(glue(" BPNSFS items: always impute"))

message(glue(" Self-reported play: impute when missing"))

# SPEED OPTIMIZATION: Use quickpred() to create sparse predictor matrix

pred <- quickpred(

surveys_wide,

mincor = 0.3,

minpuc = 0.3,

include = c(

"age",

"wemwbs",

"self_reported_weekly_play"

),

exclude = c(

"pid",

"gender",

"edu_level",

"employment",

"marital_status",

"diaries_completed"

)

)

# DIAGNOSTIC: Check for collinearity issues in analytical sample

message(glue("\n=== COLLINEARITY DIAGNOSTICS ==="))

# Check for variables with zero variance

zero_var <- sapply(surveys_wide, function(x) {

if (is.numeric(x)) {

v <- var(x, na.rm = TRUE)

!is.na(v) && v < 1e-10

} else {

FALSE

}

})

if (any(zero_var, na.rm = TRUE)) {

message(glue(

"⚠️ Variables with zero variance: {paste(names(which(zero_var)), collapse=', ')}"

))

}

# Check for perfect/near-perfect correlations among items to be imputed

items_to_check <- c(

grep("^bpnsfs_[1-6]_w", names(surveys_wide), value = TRUE),

grep("^bangs_[1-6]_w", names(surveys_wide), value = TRUE)

)

if (length(items_to_check) > 1) {

cor_mat <- cor(surveys_wide[, items_to_check], use = "pairwise.complete.obs")

diag(cor_mat) <- 0 # Ignore diagonal

high_cor <- which(abs(cor_mat) > 0.9999, arr.ind = TRUE)

if (nrow(high_cor) > 0) {

message(glue("⚠️ PERFECT CORRELATIONS DETECTED:"))

for (i in 1:min(nrow(high_cor), 10)) {

# Show first 10

var1 <- items_to_check[high_cor[i, 1]]

var2 <- items_to_check[high_cor[i, 2]]

r <- cor_mat[high_cor[i, 1], high_cor[i, 2]]

message(glue(" {var1} <-> {var2}: r = {round(r, 6)}"))

}

} else {

message(glue(

"✓ No perfect correlations detected (max r = {round(max(abs(cor_mat), na.rm=TRUE), 3)})"

))

}

}

# Report predictor matrix density

pred_density <- 100 * mean(pred != 0)

message(glue("Predictor matrix density: {round(pred_density, 1)}%"))

# Check where matrix for potential issues

n_cells_to_impute <- sum(where_matrix)

n_total_cells <- prod(dim(where_matrix))

pct_to_impute <- 100 * n_cells_to_impute / n_total_cells

message(glue(

"Cells to impute: {n_cells_to_impute}/{n_total_cells} ({round(pct_to_impute, 1)}%)"

))

# Check for variables with extreme missingness

vars_to_impute <- names(surveys_wide)[methods != ""]

miss_pct <- sapply(surveys_wide[vars_to_impute], function(x) {

100 * mean(is.na(x))

})

high_miss <- miss_pct > 80

if (any(high_miss)) {

message(glue("⚠️ Variables with >80% missing:"))

for (v in names(which(high_miss))) {

message(glue(" {v}: {round(miss_pct[v], 1)}%"))

}

}

message(glue("=== END DIAGNOSTICS ===\n"))

# Check if imputation already exists

imp_file <- "data/imputation_analytical.csv.gz"

if (file.exists(imp_file)) {

message(glue("Loading existing imputation from {imp_file}"))

imp_long <- read_csv(imp_file, show_col_types = FALSE)

# Convert back to mids object

imp <- as.mids(imp_long)

message(glue("Loaded imputation object (M={imp$m})"))

} else {

message(glue("No existing imputation found - running MICE"))

message(glue(

"Running MICE: M={M_IMPUTATIONS}, N={nrow(surveys_wide)}, started {Sys.time()}"

))

imp <- futuremice(

data = surveys_wide,

m = M_IMPUTATIONS,

method = methods,

predictorMatrix = pred,

where = where_matrix,

maxit = 5,

n.core = parallel::detectCores() - 1,

parallelseed = 8675309

)

message(glue("Completed {Sys.time()}"))

# Save imputation results as compressed CSV

imp_long <- complete(imp, action = "long", include = TRUE)

write_csv(imp_long, imp_file)

message(glue("Saved imputation to {imp_file}"))

# Diagnostic checks (only after fresh imputation)

if (nrow(imp$loggedEvents) > 0) {

message(glue(

"Warning: {nrow(imp$loggedEvents)} logged events during imputation"

))

print(head(imp$loggedEvents))

} else {

message("No logged events - imputation completed without issues")

}

}

# Quantify missingness for inline reporting

overall_miss_pct <- number(pct_to_impute, 0.1)

# Number of participants with any missing data

n_ppl_with_missing <- surveys_wide |>

select(all_of(vars_to_impute)) |>

mutate(any_missing = rowSums(is.na(pick(everything()))) > 0) |>

pull(any_missing) |>

sum()

pct_ppl_with_missing <- number(

100 * n_ppl_with_missing / nrow(surveys_wide),

0.1

)

```

```{r}

#| label: post-imputation-centering

#| code-summary: "Show code (Calculate composites and within/between-person centering)"

#| output: false

# Post-imputation centering

m_imputations <- M_IMPUTATIONS

message(glue("=== Post-imputation processing (M={m_imputations}) ==="))

# Convert to long format and calculate composite scores from imputed items

daily_imputed <- complete(imp, action = "long", include = TRUE) |>

pivot_longer(

cols = -c(

.imp,

.id,

pid,

age,

gender,

edu_level,

employment,

marital_status,

life_sat_baseline,

wemwbs,

diaries_completed,

self_reported_weekly_play

),

names_to = c(".value", "wave"),

names_pattern = "(.+)_w(.+)"

) |>

mutate(wave = as.factor(wave)) |>

# Calculate composite scores from imputed individual items

mutate(

global_ns = rowMeans(pick(bpnsfs_1, bpnsfs_3, bpnsfs_5), na.rm = TRUE),

global_nf = rowMeans(pick(bpnsfs_2, bpnsfs_4, bpnsfs_6), na.rm = TRUE),

game_ns = rowMeans(pick(bangs_1, bangs_3, bangs_5), na.rm = TRUE),

game_nf = rowMeans(pick(bangs_2, bangs_4, bangs_6), na.rm = TRUE)

) |>

# Rejoin telemetry variables (no imputation needed)

left_join(telemetry_vars, by = c("pid", "wave")) |>

# Calculate person means within each imputation

group_by(.imp, pid) |>

mutate(

global_ns_pm = mean(global_ns, na.rm = TRUE),

global_nf_pm = mean(global_nf, na.rm = TRUE),

game_ns_pm = mean(game_ns, na.rm = TRUE),

game_nf_pm = mean(game_nf, na.rm = TRUE)

) |>

ungroup() |>

# Calculate grand means and centered values within each imputation

group_by(.imp) |>

mutate(

global_ns_gm = mean(global_ns_pm, na.rm = TRUE),

global_nf_gm = mean(global_nf_pm, na.rm = TRUE),

game_ns_gm = mean(game_ns_pm, na.rm = TRUE),

game_nf_gm = mean(game_nf_pm, na.rm = TRUE),

# Within-person centered (deviation from person mean)

global_ns_cw = global_ns - global_ns_pm,

global_nf_cw = global_nf - global_nf_pm,

game_ns_cw = game_ns - game_ns_pm,

game_nf_cw = game_nf - game_nf_pm,

# Between-person centered (person mean - grand mean)

global_ns_cb = global_ns_pm - global_ns_gm,

global_nf_cb = global_nf_pm - global_nf_gm,

game_ns_cb = game_ns_pm - game_ns_gm,

game_nf_cb = game_nf_pm - game_nf_gm

) |>

ungroup()

# Main analysis dataset: use .imp > 0 (imputed datasets)

# .imp == 0 contains the original incomplete data

dat <- daily_imputed |> filter(.imp > 0)

message(glue(

"Main analysis dataset: {n_distinct(dat$pid)} participants, {nrow(dat)} observations"

))

# Create unique row ID for long format (needed for as.mids conversion)

daily_imputed <- daily_imputed |>

group_by(.imp) |>

mutate(.id = row_number()) |>

ungroup()

# Helper object for mice::with() and pool() if needed

imp_mids <- as.mids(daily_imputed)

```

---

abstract: |

Psychological theories of media use often assume that subjective motivation affects observable behavior. Using video games as a test case, we examine this assumption by pairing repeated self-reports of motivation with objective digital trace data at scale. Across two datasets comprising tens of thousands of hours of gaming behavior, we test predictions derived from self-determination theory and the Basic Needs in Games (BANG) model, which posit that autonomy, competence, and relatedness experiences drive engagement. Study 1 (preregistered) analyzes 11k daily observations from 555 U.S. players with 30 days of multi-platform digital trace data. Study 2 (exploratory) examines 102k sessions from 9k PowerWash Simulator players, linking in-game experience prompts to behavioral logs. In both studies, need satisfaction was robustly associated with subjective states but showed weak or null associations with short-term gaming behavior, including subsequent play, session length, and return latency, across extensive preregistered and robustness analyses. These findings reveal a substantial motivation--behavior gap and suggest that SDT-based accounts may overestimate the role of need satisfaction in explaining when or how much people play. Data and code are available under a {{< meta data-license >}} license at {{< meta data-url >}}.

---

# Introduction

Digital technology use constitutes one of the domains in which behavior is most directly observable. Digital media (1) are widely used---upwards of half of adults and nearly all children in the UK and US regularly play video games [@EntertainmentSoftwareAssociation20242024; @Ofcom2023Online]; (2) generate digital trace data (automatically logged histories of user behavior), and (3) affect the health of at least some users in important ways, both for better and for worse [@GranicEtAl2014benefits; @BallouEtAl2024How]. These putative impacts of media use behavior on health are of interest to users, industry professionals, policymakers, clinicians, and families, but research to date has had limited success supporting these groups [@Ballou2023Manifesto]. Among other difficulties, progress has been stymied by three challenges: (1) accessing granular behavioral data, (2) measuring mental health with sufficient temporal detail, and (3) aligning theory with growing evidence that effects relate primarily to the quality, rather than quantity, of play [@BallouDeterding2024Basic; @Buchi2024Digital; @Orben2022Digital].

Digital trace data—histories of user actions generated when interacting with games—addresses several of these challenges. Compared to self-report, trace data provides greater detail about what, when and how much people play while alleviating concerns about recall biases [@ErnalaEtAl2020How; @KahnEtAl2014why; @ParryEtAl2021systematic]. However, three key limitations remain. First, while gaming companies collect player data at scale [@El-NasrEtAl2021Game], these data are rarely accessible to independent researchers. Where access has been negotiated or engineered via open source methods, it typically covers just one game or platform. While single-game studies can offer high depth and detail, they are potentially a small part of a person's gaming diet---engaged UK and US players use an average of 2.8 platforms [@BallouEtAl2025Perceived]. Given this, we advocate for openly available digital trace data spanning both single-game studies and multi-platform data access to understand holistic effects.

Second, while trace data is often richly longitudinal, it has to date only been paired with wellbeing surveys consisting of either a single measurement wave [@BallouEtAl2025Perceived; @JohannesEtAl2021Video] or waves separated by multiple weeks [@LarrieuEtAl2023How; @VuorreEtAl2022Time]. Early evidence suggests gaming effects may materialize and dissipate within 6 hours [@VuorreEtAl2023Affective; @BallouEtAl2025Perceived], and subjective wellbeing varies substantially across a day [@LuhmannEtAl2021Subjective]. Experience sampling and daily diary methods, embraced in social media research [@AalbersEtAl2021Caught; @SiebersEtAl2021Explaining], are needed to capture nuanced, short-lived effects and to better differentiate within- and between-person relationships [@JohannesEtAl2024How].

Third, effects of gaming are likely nuanced and contextual, but existing research lacks strong theoretical frameworks to guide investigation. Studies using digital trace data have ruled out simple playtime--wellbeing relationships [@BallouEtAl2024Registered; @JohannesEtAl2021Video; @VuorreEtAl2022Time; @LarrieuEtAl2023How; @BallouEtAl2025Perceived], supporting calls to move beyond quantity-focused approaches [@BallouEtAl2025How]. To do so, we need theory-driven predictions about how psychological states relate to gaming behavior itself—what motivates people to play in the first place, and how daily fluctuations in wellbeing shape engagement patterns. Self-Determination Theory [SDT, @Ryan2023Oxforda] offers such a framework, proposing that satisfaction of basic psychological needs---autonomy, competence, and relatedness---drives motivated behavior including media use. Understanding whether and when people choose to play games may depend critically on their experiences of needs satisfaction in daily life, yet the majority of studies that have tested these behavioral predictions did so without access to intensive longitudinal data [e.g., @BenderGentile2019internet; @AllenAnderson2018satisfaction].

Together, these gaps point to clear next steps: collect digital trace data in the form of both comprehensive multi-platform records and single-game, granular interactions; pair it with high-resolution, repeated wellbeing measurements; and use this data for theory-driven investigations of play quality and context. These are the aims of the present study.

# Self-determination theory

Self-determination theory [@RyanDeci2017Selfdetermination] proposes three innate and universal psychological needs: the need for autonomy (to feel in control over one’s life and volitional in one’s actions), competence (to act effectively and exert mastery in the world), and relatedness (to feel that one is valued by others and values them in return). These basic psychological needs are theorized to be direct antecedents of intrinsic and autonomous motivation, as well as vital nutriments required for a person to live a fully functional life [@Ryan2023Oxford]. Across the environments we inhabit and activities we perform, these needs can be either satisfied or frustrated [@VansteenkisteEtAl2020basic].

There has been substantial research into how games and other entertainment media can support basic psychological needs [@PrzybylskiEtAl2010motivational; @TyackMekler2020Selfdetermination]. Games are adept at satisfying all three basic needs; games that better satisfy needs are more engaging; and having one’s needs satisfied during gaming is associated with better mental health outcomes during and after play [@ReerQuandt2020Digital; @TyackMekler2020Selfdetermination; @VellaJohnson2012Flourishing]. A large body of work supports that basic need satisfaction supports intrinsic motivation, and intrinsic motivation translates to higher self-reported behavioral engagement [@RyanEtAl2022We].

## The Basic Needs in Games (BANG) Model

The Basic Needs in Games (BANG) model of video game play and mental health [@BallouDeterding2024Basic] builds upon the core SDT principle that any action’s impact on mental health is mediated by the extent to which it satisfies or frustrates basic psychological needs. By differentiating between playtime and quality of play, BANG seeks to explain seemingly conflicting earlier findings that playtime itself is largely unrelated to wellbeing, but that some players do experience meaningful benefits or harms from their video game play.

To date, however, BANG remains largely untested. Hence, the goal of this study is to test several key BANG hypotheses. We label the hypotheses of the current study in numerical order (e.g., H1) but also provide the numbered label from the original paper (e.g., B6) for clarity of potential falsification.

### The Relationship Between Basic Needs in Games and Global Basic Needs (H1)

Following the hierarchical model of intrinsic and extrinsic motivation [@Vallerand1997Hierarchical], BANG conceives of basic needs as operating at three levels of generality: situational (a particular gaming session), contextual (gaming as a whole), and global (one’s life in general). Experiences at lower levels of generality feed into and co-constitute higher levels---experiences with games are one (greater or lesser) element of one's life overall.

A positive relationship between need satisfaction in games and overall need satisfaction is well-established in prior literature [@AllenAnderson2018satisfaction; @BallouEtAl2024Basic; @BradtEtAl2024Are]. BANG (B6) formalizes this relationship, proposing that need satisfaction experienced during gaming sessions contributes to overall need satisfaction in life. Thus, BANG hypothesizes:

*H1. When individuals’ in-game needs are better satisfied, they report greater overall need satisfaction.*

### Expectations (H2a)

Early articulations of SDT propose that goal or activity selection is (intrinsically) motivated by the 'awareness of potential satisfaction' of basic psychological needs or 'expectations about the satisfaction of those [salient] motives' [@DeciRyan1985Intrinsic, p. 231--239]. Need-related outcome expectations are closely related to intrinsic motivation [perhaps even forming part of the computational machinery underlying motivation @MurayamaJach2023critique], and the behavioral product of these expectations is therefore greater behavioral engagement.

Expectations have, perhaps surprisingly, not featured prominently in subsequent empirical work; most gaming and media use-related SDT work focuses on need satisfaction or frustration as the experiential consequence of media consumption [@BallouDeterding2023Basic]. Need experiences can explain loops of self-sustaining activity, but cannot explain initial selective exposure to gaming where the activity has not commenced.

BANG proposes that experiences of need satisfaction during a particular gaming session positively affect players' expectations for future need satisfaction with the current game, similar games, and gaming as a whole. This prediction is grounded in reports that expected need frustration is reported to modulate both initial and continued gaming exposure [@BallouDeterding2023Just], and in broader findings that outcome expectations are a strong predictor of continued media engagement [@ChangEtAl2014effects; @KocakAlanEtAl2022Replaying; @LaroseEtAl2001Understanding]. From BANG (B8), we therefore derive the following hypothesis:

*H2a. When individuals’ in-game need satisfaction is higher, they are more likely to play video games in the 24-hour period after survey completion.*

### Compensation (H2b)

SDT predicts that (global) need frustration results in compensatory behavior---people attempt to replenish needs that are not being met by altering their behavior [e.g., @SheldonEtAl2011twoprocess]. The potent need satisfaction offered by games constitute one way for people to compensate, particularly those who are highly engaged with gaming and have high gaming literacy [@BallouEtAl2022People]. The so-called "need density hypothesis" predicts that problematic or disordered gaming is most likely to occur when a person experiences high need satisfaction in games and high need frustration in other life domains [@RigbyRyan2011glued]. In other words, problematic gaming is theorized to occur, at least for some, due to a negative feedback loop whereby compensation occurs and becomes maladaptive such that the person's other compensatory tools besides gaming are progressively diminished. Several studies have found support for the need density hypothesis account [@BradtEtAl2024Are; @AllenAnderson2018satisfaction; @MillsEtAl2018exploring].

BANG operationalizes this compensatory play via intrinsic motivation. Frustrated needs in one’s life in general make opportunities to fulfill those needs more salient, which---all else equal---manifests phenomenologically as a stronger preference towards activities that satisfy the frustrated need(s). Given this, we hypothesize in the context of our sample of moderately to highly-engaged video game players (derived from BANG Hypothesis 9):

*H2b. When individuals’ global need frustration is higher, they are more likely to play video games in the 24-hour period after survey completion.*

### Displacement (H3)

Playtime, BANG argues, only becomes problematic when it displaces other activities essential to the maintenance of need satisfaction in life overall. Commonly proposed problematic displacements are work/school responsibilities [@DrummondSauer2020Timesplitters], personal relationships [@DomahidiEtAl2018Longitudinal], and physical health or sleep [@KingEtAl2013impact]. Displacing these essential activities would thereby reduce global need satisfaction. Thus, BANG (B5) hypothesizes:

*H3. When a person’s most recent gaming is reported to displace a core life domain (work/school, social engagements, sleep/eating/fitness, or caretaking), their global need satisfaction is lower.*

# Study 1: Preregistered Analysis of Multi-Platform Digital Trace Data and Daily Surveys

Study 1 was pre-registered on the Open Science Framework (OSF) in the form of a Stage 1 Programmatic Registered Report [@BallouEtAl2025Psychological]. The present report is the first of three preregistered Stage 2 outputs; two other components, focused on sleep and genres, respectively, are forthcoming.

All data and analysis code are available on Zenodo ({{< meta data-url >}}).

## Study 1 Method

::: {.place arguments='top, scope: "parent", float: true'}

```{r}

#| label: tbl-platforms

#| code-summary: "Show code (Create platform details table)"

#| tbl-cap: "Platform Details"

tibble(

Platform = c(

"Nintendo",

"Xbox",

"Steam"

),

`Data Source` = c(

"Data-sharing agreements with Nintendo of America",

"Data-sharing agreement with Microsoft",

"Custom web app (Gameplay.Science)"

),

`Account Linking Process` = c(

"Participants shared an identifier contained within a QR code on the Nintendo web interface. Nintendo of America uses this identifier to retrieve gameplay data and share it with the research team.",

"Participants consented to data sharing by opting in to the study on Xbox Insiders with their Xbox account. Microsoft retrieved and shared pseudonymized gameplay data for all consented accounts.",

"Using a web app we developed (https://gameplay.science), participants consented to have their gameplay data monitored for the duration of the study. Authentication uses the official Steam API (OpenID)."

),

`Type of Data Collected` = c(

"Session records (what game was played, at what time, for how long) for first-party games only (games published in whole or in part by Nintendo).",

"Session records (what game was played, at what time, for how long). Game titles were replaced with a random persistent identifier, but genre(s) and age ratings are shared.",

"Hourly aggregates per game (every hour, the total time spent playing each game during the previous hour)"

)

) |>

tt(

escape = TRUE,

notes = list(

"a" = list(

i = 1,

j = 3,

text = "See https://accounts.nintendo.com/qrcode."

),

"b" = list(

i = 1,

j = 4,

text = "Nintendo-published games accounted for 63% of Switch playtime in our sample."

),

"c" = list(

i = 2,

j = 3,

text = "See https://support.xbox.com/en-US/help/account-profile/manage-account/guide-to-insider-program."

)

)

) |>

style_tt(fontsize = 0.7) |>

style_tt(i = 0, bold = TRUE, align = "c")

```

:::

### Design

The data for this study comprise a subset of the data from the Open Play study [@BallouEtAl2025Open], version 1.0.0. In the Open Play study, participants provided access to automated records of their gaming history on one or more platforms (Xbox, Steam, Nintendo Switch, iOS, Android) and completed an intake survey followed by a 30-day daily diary study. The intake survey included demographic questions and baseline measures of wellbeing. Daily data was collected between Oct 2024 and Aug 2025.

Participants were recruited in collaboration with two panel providers, Prolific and PureProfile. Participants were eligible if they were aged 18 or older, resided in the United States, self-reported playing video games for at least 1 hour per week, of which 50% must take place on eligible platforms (Nintendo, Xbox, and Steam), and successfully linked at least one gaming account on Xbox, Steam, and/or Nintendo Switch with validated recent digital trace data. iOS and Android data were collected but are not used in this analysis. For full details of the recruitment procedures and study methodology, see [@BallouEtAl2025Open].

### Ethics

This study received ethical approval from the Social Sciences and Humanities Inter-Divisional Research Ethics Committee at the University of Oxford (OII_CIA_23_107). All participants provided informed consent at the start of the study, including consent to their data being shared openly for reanalysis.

Participants were paid at an average rate of £12/hour, equating to: £0.20 for a 1-minute screening, £2 for the 10-minute intake survey (plus £5 for linking at least one account with recent data), £0.80 for each 4-minute daily survey. Participants received a £10 bonus payment for completing at least 24 out of 30 daily surveys.

### Sample Size Justification

As specified in the Stage 1 Registered Report, sample size was determined by feasibility/resources rather than a formal power analysis, with an initial target of recruiting up to 1,000 U.S. participants into the diary component, with ~30% expected attrition. Sensitivity analyses showed that this sample size was sufficient to estimate target associations with moderate precision (95% CI spanning .13 scale points on a 7-point scale) (https://github.com/digital-wellbeing/platform-study-rr).

### Participant Inclusion/Exclusion Criteria

All eligibility and exclusion criteria were specified prior to data analysis in Stage 1 and/or documented as deviations in @tbl-deviations. Participants were eligible if they were aged 18+, resided in the U.S., reported playing video games at least 1 hour/week, reported that a substantial share of their gaming occurred on eligible platforms (Nintendo/Xbox/Steam), and successfully linked at least one eligible account with validated recent digital trace data. As documented, two eligibility thresholds were adjusted for feasibility relative to Stage 1: the platform-share requirement was lowered (75% → 50%), and the recent valid trace requirement was extended (7 days → 14 days).

Participants who completed fewer than 15 daily surveys were excluded from the primary (imputed) analyses. The ≥15-survey threshold aligns with the Stage 1 criterion of avoiding analyses that would require imputing more data than collected (≥50%).

### Participants

```{r}

#| label: participants

#| code-summary: "Show code (Calculate participant statistics and response rates)"

# Analytic sample (≥15 completions, used in imputed analyses)

analytic_pids <- dat |>

filter(.imp == 1) |>

distinct(pid) |>

pull(pid)

# Prepare participant data with collapsed categories

participant_data <- intake |>

filter(pid %in% c(full_eligible_pids, analytic_pids)) |>

mutate(

# Collapse gender to main categories

gender = ifelse(

gender %in% c("Man", "Woman"),

gender,

"Other gender identity"

),

# Collapse ethnicity to main categories

ethnicity_collapsed = case_when(

str_detect(ethnicity, "White") ~ "White",

str_detect(

ethnicity,

"Black|African American|African, Caribbean"

) ~ "Black/African American",

str_detect(ethnicity, "Asian") ~ "Asian",

str_detect(ethnicity, "Two or More|Mixed") ~ "Multiracial",

is.na(ethnicity) ~ NA_character_,

TRUE ~ "Other"

),

# Collapse education to main levels

education_collapsed = case_when(

str_detect(

edu_level,

"Less than|No formal|Primary"

) ~ "Less than high school",

str_detect(

edu_level,

"High school|Secondary School|GCSE|Some college|Some University"

) ~ "High school or some college",

str_detect(edu_level, "Associate") ~ "Associate degree",

str_detect(

edu_level,

"Bachelor|University Bachelors"

) ~ "Bachelor's degree",

str_detect(

edu_level,

"Master|Graduate|professional degree|doctoral|post-graduate"

) ~ "Graduate degree",

is.na(edu_level) ~ NA_character_,

TRUE ~ "Other"

),

# Assign to sample

sample = case_when(

pid %in% analytic_pids ~ "Analytic sample",

TRUE ~ "Full eligible sample"

)

) |>

select(

pid,

sample,

age,

gender,

ethnicity_collapsed,

education_collapsed,

self_reported_weekly_play,

diaries_completed

)

# Sample sizes for text

n_analytic <- sum(participant_data$sample == "Analytic sample")

n_full <- sum(

participant_data$sample %in% c("Analytic sample", "Full eligible sample")

)

# Gender summary for analytic sample (for text)

gender_analytic <- participant_data |>

filter(sample == "Analytic sample") |>

count(gender) |>

mutate(pct = 100 * n / sum(n))

pct_men <- gender_analytic |> filter(gender == "Man") |> pull(pct) |> round(1)

pct_women <- gender_analytic |>

filter(gender == "Woman") |>

pull(pct) |>

round(1)

pct_other <- gender_analytic |>

filter(gender == "Other gender identity") |>

pull(pct) |>

round(1)

gender_summary <- glue(

"{pct_men}% men, {pct_women}% women, {pct_other}% other gender identities"

)

```

```{r}

#| label: tbl-participants

#| tbl-cap: "Participant characteristics by sample"

#| code-summary: "Show code (Generate participant demographics table)"

# Build table using map

summary_table <- bind_rows(

# Sample sizes

tibble(

Characteristic = "**N**",

`Full sample` = as.character(n_full),

`Analytic sample` = as.character(n_analytic)

),

# Age

tibble(

Characteristic = "Age (years)",

`Full sample` = format_mean_sd(

participant_data$age[participant_data$sample == "Full eligible sample"]

),

`Analytic sample` = format_mean_sd(

participant_data$age[participant_data$sample == "Analytic sample"]

)

),

# Categorical demographics

create_categorical_section(

participant_data,

"gender",

"Gender",

c("Man", "Woman", "Other gender identity")

),

create_categorical_section(

participant_data,

"ethnicity_collapsed",

"Ethnicity",

c("White", "Black/African American", "Asian", "Multiracial", "Other")

),

create_categorical_section(

participant_data,

"education_collapsed",

"Education",

c(

"Less than high school",

"High school or some college",

"Associate degree",

"Bachelor's degree",

"Graduate degree"

)

),

# Gaming behavior section

tibble(

Characteristic = "**Gaming behavior**",

`Full sample` = "",

`Analytic sample` = ""

),

tibble(

Characteristic = "Weekly play (hours)",

`Full sample` = format_mean_sd(

participant_data$self_reported_weekly_play[

participant_data$sample == "Full eligible sample"

] /

60

),

`Analytic sample` = format_mean_sd(

participant_data$self_reported_weekly_play[

participant_data$sample == "Analytic sample"

] /

60

)

),

# Study engagement section

tibble(

Characteristic = "**Study engagement**",

`Full sample` = "",

`Analytic sample` = ""

),

tibble(

Characteristic = "Surveys completed",

`Full sample` = format_mean_sd(

participant_data$diaries_completed[

participant_data$sample == "Full eligible sample"

]

),

`Analytic sample` = format_mean_sd(

participant_data$diaries_completed[

participant_data$sample == "Analytic sample"

]

)

)

)

# Identify rows for styling

header_rows <- which(

str_detect(summary_table$Characteristic, "^\\*\\*") |

(summary_table$`Full sample` == "" & summary_table$`Analytic sample` == "")

)

indent_rows <- which(str_detect(summary_table$Characteristic, "^ "))

# Create table

summary_table |>

tt(

notes = "Values are M (SD) or N (%). Ethnicity and education categories collapsed to main levels for clarity."

) |>

format_tt(j = 1, markdown = TRUE) |>

style_tt(i = header_rows, bold = TRUE) |>

format_tt(i = header_rows, j = 1, fn = function(x) {

str_remove_all(x, "\\*\\*")

}) |>

style_tt(i = indent_rows, j = 1, indent = 1) |>

format_tt(i = indent_rows, j = 1, fn = function(x) str_trim(x)) |>

style_tt(fontsize = 0.7) |>

style_tt(i = 0, bold = TRUE)

```

The current sample included `r n_analytic` participants (`r gender_summary`) who completed at least 15 daily surveys and were included in the main imputed analyses. The full eligible sample comprised `r n_full` participants who completed at least 1 survey and were included in complete case sensitivity analyses.

::: {.place arguments='top, scope: "parent", float: true'}

```{r}

#| label: fig-descriptives

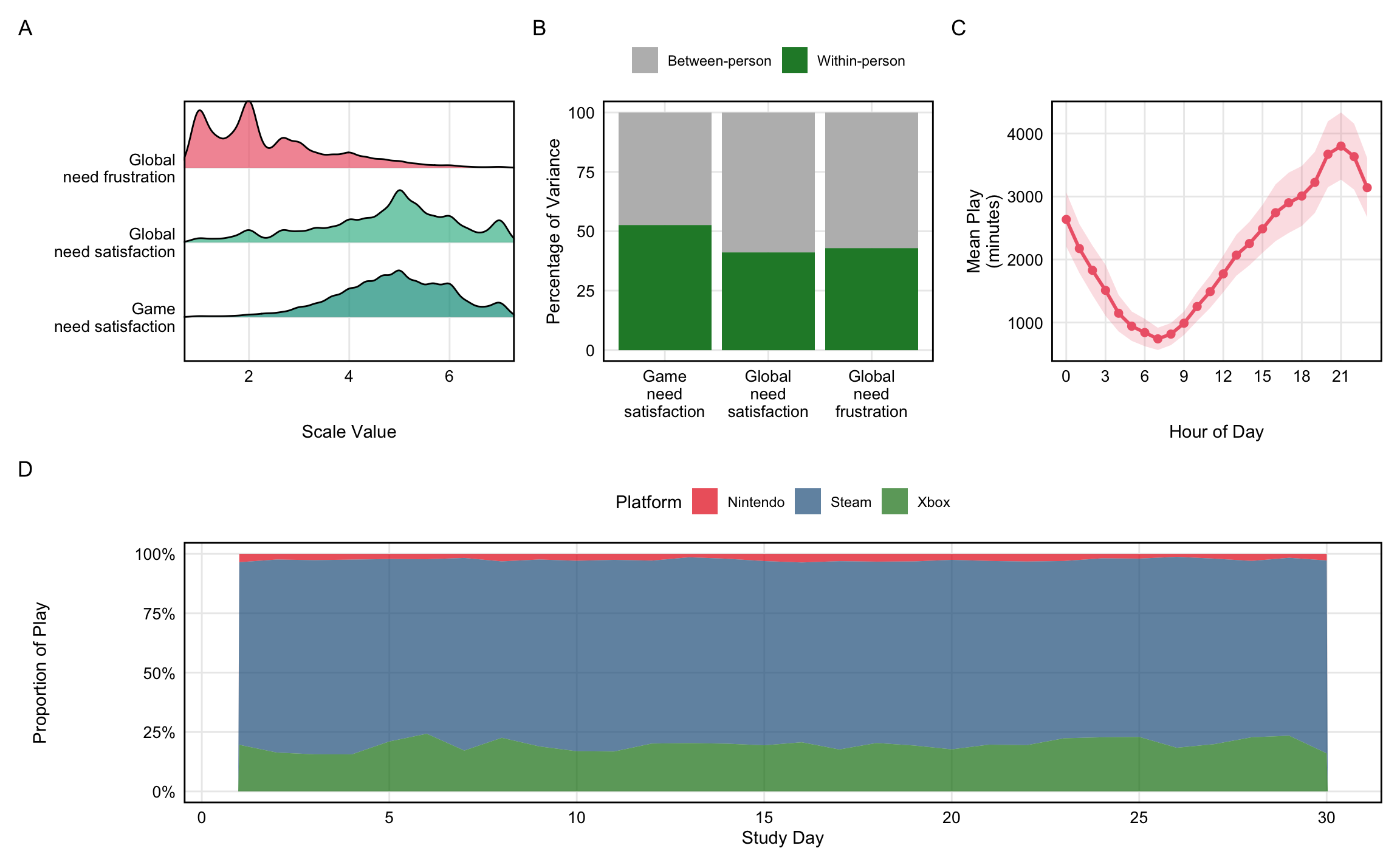

#| fig-cap: "Descriptive statistics for key variables. (A) Distributions of need satisfaction and frustration variables. (B) Variance decomposition showing proportion of variance at within-person vs between-person levels. (C) Play volume by hour of day (local time). (D) Proportion of play on each platform across study days 1-30."

#| code-summary: "Show code (Create main descriptive figure)"

#| fig-height: 8

#| fig-width: 12

# Panel A: Density ridges for key variables

dat_desc <- dat |> filter(.imp == 1)

panel_a <- dat_desc |>

select(global_ns, global_nf, game_ns) |>

pivot_longer(everything(), names_to = "variable", values_to = "value") |>

mutate(

variable = factor(

variable,

levels = c("game_ns", "global_ns", "global_nf"),

labels = c(

sub(" ", "\n", labels["game_ns"]),

sub(" ", "\n", labels["global_ns"]),

sub(" ", "\n", labels["global_nf"])

)

)

) |>

ggplot(aes(x = value, y = variable, fill = variable)) +

geom_density_ridges(alpha = 0.7, scale = 0.9, na.rm = TRUE) +

scale_fill_manual(

values = setNames(

c(colors$game_ns, colors$global_ns, colors$global_nf),

c(

sub(" ", "\n", labels["game_ns"]),

sub(" ", "\n", labels["global_ns"]),

sub(" ", "\n", labels["global_nf"])

)

)

) +

labs(x = "Scale Value", y = NULL) +

coord_cartesian(xlim = c(1, 7)) +

theme(legend.position = "none")

# Panel B: Variance decomposition

panel_b <- dat_desc |>

select(pid, global_ns, global_nf, game_ns) |>

pivot_longer(

c(global_ns, global_nf, game_ns),

names_to = "variable",

values_to = "value"

) |>

group_by(variable) |>

summarise(

var_total = var(value, na.rm = TRUE),

var_between = var(tapply(value, pid, mean, na.rm = TRUE), na.rm = TRUE),

.groups = "drop"

) |>

mutate(

pct_between = 100 * var_between / var_total,

pct_within = 100 * (var_total - var_between) / var_total,

variable = factor(

variable,

levels = c("game_ns", "global_ns", "global_nf"),

labels = c(

gsub(" ", "\n", labels["game_ns"]),

gsub(" ", "\n", labels["global_ns"]),

gsub(" ", "\n", labels["global_nf"])

)

)

) |>

pivot_longer(

c(pct_within, pct_between),

names_to = "component",

values_to = "percentage"

) |>

mutate(

component = factor(

component,

levels = c("pct_between", "pct_within"),

labels = c(labels["Between-person"], labels["Within-person"])

)

) |>

ggplot(aes(x = variable, y = percentage, fill = component)) +

geom_col(position = "stack") +

scale_fill_manual(

values = c(

"Between-person" = colors$between,

"Within-person" = colors$within

)

) +

labs(x = NULL, y = "Percentage of Variance", fill = NULL) +

theme(legend.position = "top")

# Panel C: Hour of day patterns

panel_c <- hourly_telemetry |>

filter(pid %in% unique(dat_desc$pid)) |>

mutate(hour = hour(hour_start_local)) |>

group_by(pid, hour) |>

summarise(total_minutes = sum(minutes, na.rm = TRUE), .groups = "drop") |>

group_by(hour) |>

summarise(

mean_minutes = mean(total_minutes, na.rm = TRUE),

se = sd(total_minutes, na.rm = TRUE) / sqrt(n()),

.groups = "drop"

) |>

ggplot(aes(x = hour, y = mean_minutes)) +

geom_ribbon(

aes(ymin = mean_minutes - 1.96 * se, ymax = mean_minutes + 1.96 * se),

fill = colors$global_nf,

alpha = 0.2

) +

geom_line(color = colors$global_nf, linewidth = 1) +

geom_point(color = colors$global_nf, size = 2) +

labs(x = "Hour of Day", y = "Mean Play\n(minutes)") +

scale_x_continuous(breaks = seq(0, 23, 3))

# Panel D: Platform usage over study days

panel_d <- daily_telemetry |>

mutate(day_local_date = as.Date(day_local)) |>

inner_join(

dat_desc |>

select(pid, date, wave) |>

mutate(

date_only = as.Date(date),

wave_num = as.numeric(as.character(wave))

) |>

filter(wave_num <= 30),

by = c("pid", "day_local_date" = "date_only"),

relationship = "many-to-many"

) |>

filter(minutes > 0) |>

mutate(

platform = case_when(

platform == "nintendo" ~ "Nintendo",

platform == "xbox" ~ "Xbox",

platform == "steam" ~ "Steam",

TRUE ~ platform

)

) |>

group_by(wave_num, platform) |>

summarise(total_minutes = sum(minutes), .groups = "drop") |>

group_by(wave_num) |>

mutate(proportion = total_minutes / sum(total_minutes)) |>

ggplot(aes(x = wave_num, y = proportion, fill = platform)) +

geom_area(alpha = 0.7, position = "stack") +

scale_fill_manual(

values = c(

"Nintendo" = colors$nintendo,

"Xbox" = colors$xbox,

"Steam" = colors$steam

)

) +

labs(x = "Study Day", y = "Proportion of Play", fill = "Platform") +

scale_x_continuous(breaks = seq(0, 30, 5)) +

scale_y_continuous(labels = scales::percent_format()) +

theme(legend.position = "top")

# Combine panels: top row with 3 panels, bottom row with full-width panel

((panel_a | panel_b | panel_c) / panel_d) +

plot_annotation(tag_levels = "A") &

theme(

plot.background = element_rect(fill = "white", color = NA),

panel.background = element_rect(fill = "white", color = NA)

)

```

:::

### Measures

```{r}

#| label: psychometrics

#| code-summary: "Show code (Calculate reliability for all scales)"

#| output: false

omega_global_ns <- number(

omega(

surveys |> select(bpnsfs_1, bpnsfs_3, bpnsfs_5),

plot = FALSE

)$omega.tot,

.01

)

omega_global_nf <- number(

omega(

surveys |> select(bpnsfs_2, bpnsfs_4, bpnsfs_6),

plot = FALSE

)$omega.tot,

.01

)

omega_game_ns <- number(

omega(

surveys |> select(bangs_1, bangs_3, bangs_5),

plot = FALSE

)$omega.tot,

.01

)

omega_game_nf <- number(

omega(

surveys |> select(bangs_2, bangs_4, bangs_6),

plot = FALSE

)$omega.tot,

.01

)

# proportion of surveys with subsequent play

prop_subsequent_play <- number(

nrow(surveys[surveys$telemetry_played_any_24h, ]) / nrow(surveys) * 100,

.1

)

minutes_subsequent_play <- number(

mean(surveys$play_minutes_24h, na.rm = TRUE),

1

)

sd_minutes_subsequent_play <- number(

sd(surveys$play_minutes_24h, na.rm = TRUE),

1

)

# total hours per platform during 30-day study period

platform_hours <- daily_telemetry |>

mutate(day_local_date = as.Date(day_local)) |>

inner_join(

surveys |>

select(pid, date, wave) |>

distinct() |>

mutate(

date_only = as.Date(date),

wave_num = as.numeric(as.character(wave))

) |>

filter(wave_num <= 30),

by = c("pid", "day_local_date" = "date_only"),

relationship = "many-to-many"

) |>

group_by(platform) |>

summarise(total_hours = sum(minutes, na.rm = TRUE) / 60, .groups = "drop")

hours_nintendo <- number(

platform_hours |> filter(platform == "Nintendo") |> pull(total_hours),

1,

big.mark = ","

)

hours_xbox <- number(

platform_hours |> filter(platform == "Xbox") |> pull(total_hours),

1,

big.mark = ","

)

hours_steam <- number(

platform_hours |> filter(platform == "Steam") |> pull(total_hours),

1,

big.mark = ","

)

```

The measures used in this paper are visualized in @fig-descriptives and detailed below. For other measures in the Open Play study not used here, we refer readers to the Open Play codebook [@BallouEtAl2025Open].

*Need satisfaction in games*. We measured need satisfaction and frustration in games during the most recent gaming session using 3 items from an abbreviated version of the Basic Psychological Need Satisfaction in Gaming Scale [BANGS, @BallouDeterding2024Basic]. The BANGS assesses autonomy, competence, and relatedness need satisfaction with three items for each need; we selected the highest-loading item for each need for our brief daily measure (e.g., relatedness satisfaction: "I felt I formed relationships with other players and/or characters."). Participants rated each item on a Likert scale from 1 (Strongly disagree) to 7 (Strongly agree). We calculated mean scores for need satisfaction by averaging all three items. Reliability of the composite 3-item need satisfaction index was assessed using McDonald’s omega total ($\omega_t$ = `r omega_game_ns` for need satisfaction, $\omega_t$ `r omega_game_nf` for need frustration). As needs are conceptually distinct, we expect lower reliability for the composite index than for unidimensional scales.

*Need satisfaction and frustration in daily life*. We measured need satisfaction and frustration in daily life ("global need satisfaction and frustration") during the previous 24 hours using the brief version of the Basic Psychological Need Satisfaction and Frustration Scale [@ChenEtAl2015Basic; @MartelaRyan2024Assessing]. This scale includes 6 items, with one item for need satisfaction and one item for need frustration for each of the three needs (e.g., "Today ... I was able to do things that I really want and value in life"). Participants rated each item on a Likert scale from 1 (Strongly disagree) to 7 (Strongly agree). We calculated mean scores for need satisfaction and need frustration by averaging the relevant items. Reliability of the composite 3-item need satisfaction index was assessed using McDonald’s omega total ($\omega_t$ = `r omega_global_ns` for need satisfaction; $\omega_t$ = `r omega_global_nf` for need frustration). As needs are conceptually distinct, we expect lower reliability for this composite index than for unidimensional scales.

*Video game playtime*. We measured video game playtime using digital trace data collected from participants' gaming accounts on Xbox, Steam, and Nintendo Switch. During the 30-day study period, we recorded a total of `r hours_nintendo` hours of play on Nintendo Switch, `r hours_xbox` hours on Xbox, and `r hours_steam` hours on Steam. For each daily survey, we calculated total minutes played in the 24-hour period following survey completion. We also created a binary variable indicating whether any play occurred during this period. On average, `r prop_subsequent_play`% of surveys were followed by any play in the subsequent 24 hours, with a mean of `r minutes_subsequent_play` minutes (SD = `r sd_minutes_subsequent_play`).

*Displacement*: We measured displacement of core life domains via an open text field asking participants about the hypothetical alternative activity: "Think back to your most recent gaming session. If you hadn't played a game, what would you most likely have done instead?" With LLM assistance from Qwen3-4b-instruct [@qwen3technicalreport], we coded participant responses based on whether they mentioned any of the following activities: work/school responsibilities, social engagements, sleep/eating/fitness, or caretaking---so-called "core life domains". We refined the LLM prompt through 5 iterations of manual verification of the 100 random coded responses. We created a boolean variable indicating whether any core life domain was hypothetically displaced. Full materials are provided in the supplement, including the exact LLM prompt used for classification, all raw participant responses, coded outputs, manual verification results, and reproducible code.

### Data Exclusions

Consistent with Stage 1, we applied preregistered exclusions to telemetry records prior to analysis: we excluded telemetry days with >16 hours total logged play across linked platforms, sessions >8 hours, and sessions logged in the future (indicating logging error or system-clock manipulation). We also excluded survey responses failing the preregistered attention check (duplicate need item responses differing by >1 scale point). As part of preregistered data quality processing, we removed background sessions and verified there were no overlapping sessions after preprocessing, before proceeding to the planned analyses. No additional exclusion criteria beyond those preregistered were introduced.

### Imputation

We used multiple imputation by chained equations (MICE) with predictive mean matching (PMM) to handle missing data. Missing data were imputed for daily need satisfaction/frustration items (BPNSFS, BANGS) and displacement measures (an average of `r overall_miss_pct`% missing cells across `r pct_ppl_with_missing`% of participants with at least one wave of missing data). Digital trace data were not imputed because tracking was continuous and automatic; within-platform, missing data represent recorded absence of play.

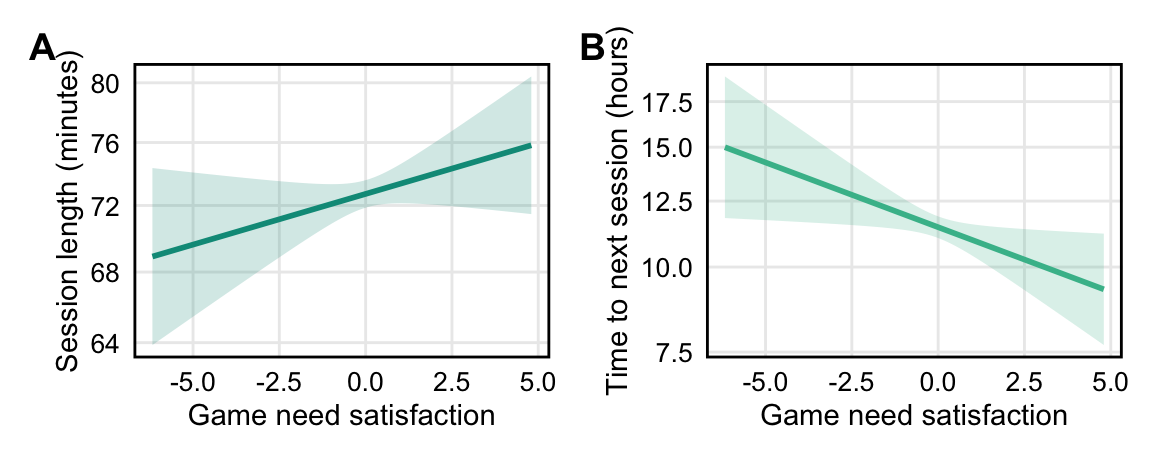

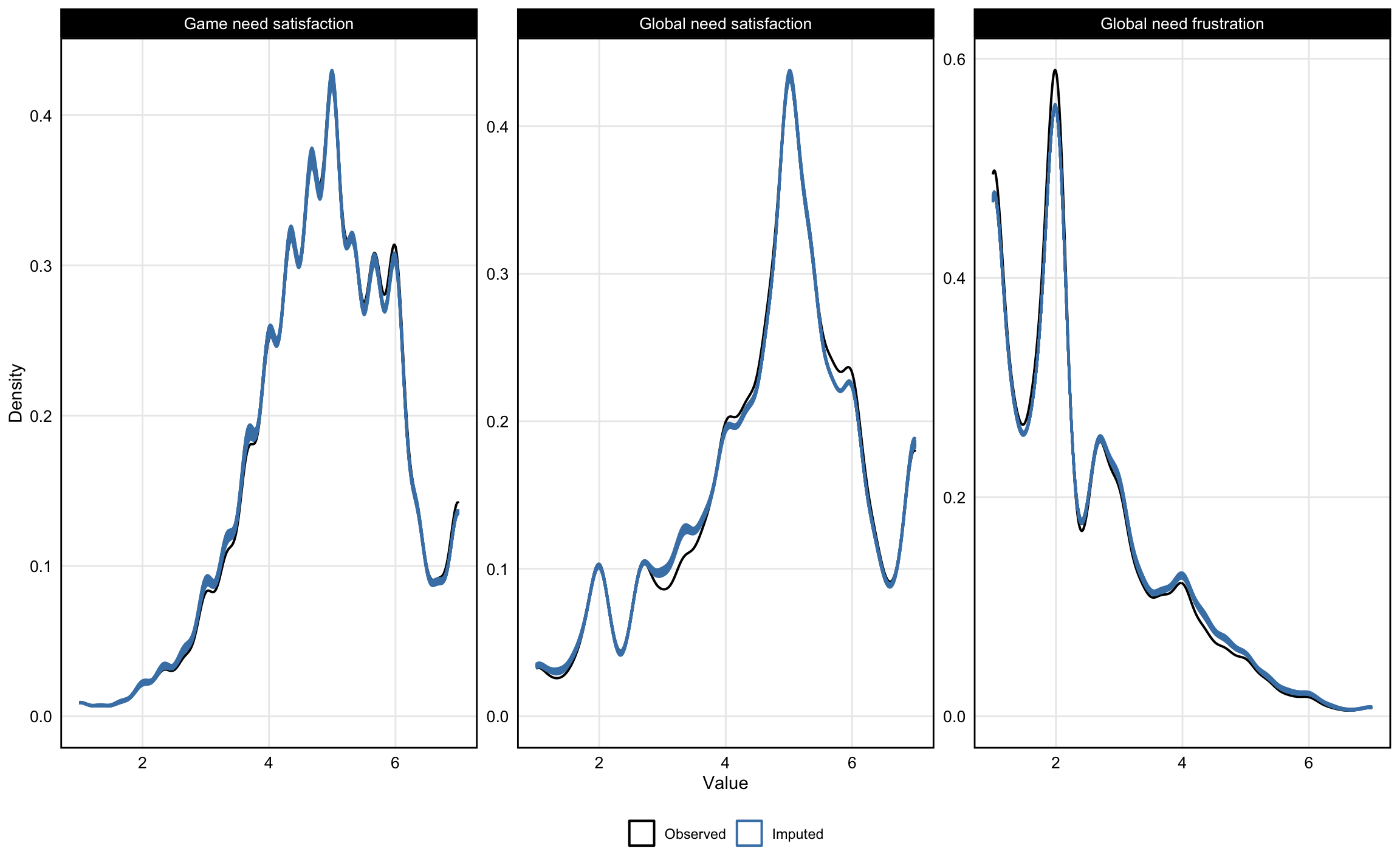

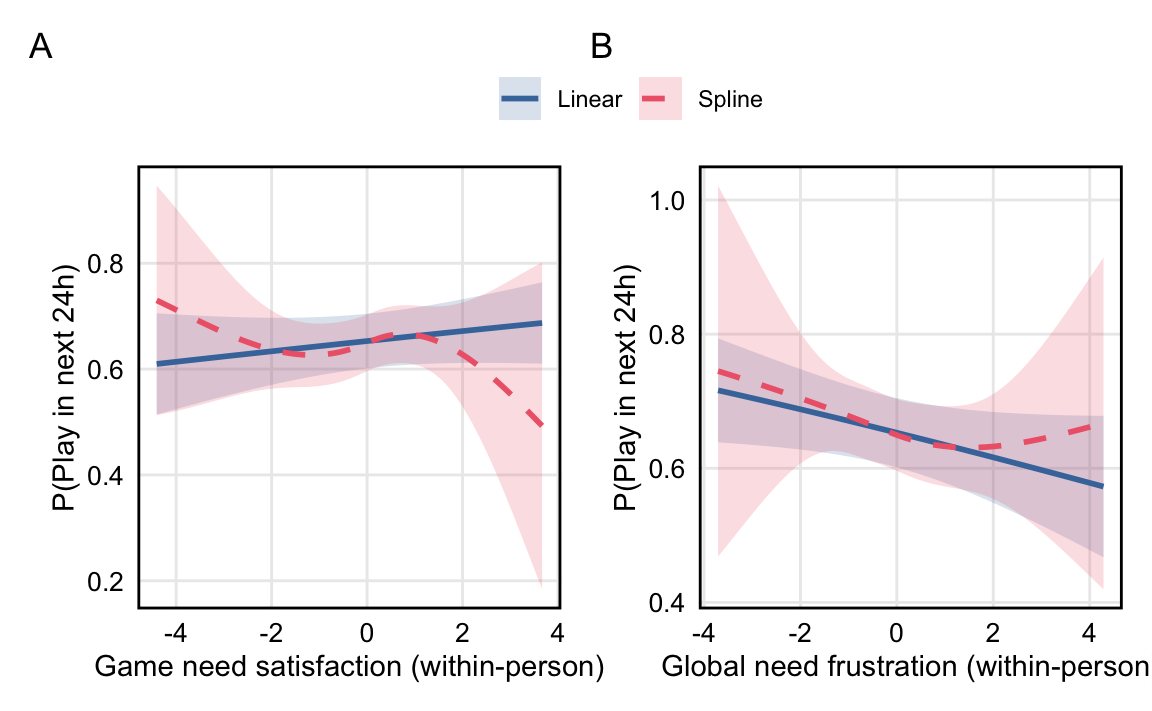

Following the two-stage protocol from @VonHippel2020How based on fraction of missing information, we used 27 imputations. For all variables, imputed distributions closely overlapped with the observed data (see Appendix @fig-imputation). Models were fit separately to each imputed dataset, and estimates were pooled across imputations using Rubin's rules as implemented in the `mice` package [@vanBuurenGroothuis-Oudshoorn2011mice]. For comparison, complete case analyses are reported in the Appendix (Sensitivity Analysis 4); results do not meaningfully differ from the imputed analyses.

### Deviations from Preregistration

We made several deviations from our preregistration to ensure we could recruit enough high-quality participants to meet our sample size goals. In our view, none are so severe enough to threaten the validity of the study. Deviations are summarized in @tbl-deviations.

::: {.place arguments='top, scope: "parent", float: true'}

```{r}

#| label: tbl-deviations

#| code-summary: "Show code (Create table of preregistration deviations)"

#| tbl-cap: "Summary of deviations from preregistration"

tibble(

`Study Aspect` = c(

"Data collection",

"Data collection",

"Data collection",

"Data collection",

"Data collection",

"Data collection",

"Analysis"

),

Preregistered = c(

"All participants sourced from PureProfile",

"Screening sample would be nationally representative by ethnicity and gender",

"Sample consists of participants aged 18--30",

"To qualify, >=75% of a participant's total gaming must take place on platforms included in the study (Xbox, Steam, Nintendo Switch)",

"Qualification contingent upon valid digital trace data within last 7 days",

"Daily surveys sent at 7pm local time",

"Data would be imputed for all participants given a 50% overall response rate"

),

Actual = c(

"Participants sourced from both PureProfile and Prolific",

"Approximately 50% of screening was done using quotas for national representativeness by ethnicity and gender; all subsequent sampling used convenience sampling with no quotas",

"Sample consists of participants aged 18-40",

"To qualify, >=50% of a participant's total gaming must take place on platforms included in the study (Xbox, Steam, Nintendo Switch)",

"Qualification contingent upon valid digital trace data within last 14 days",

"Daily surveys sent at 2pm local time",

"Data imputed for only participants with an individual 50% response rate"

),

`Justification for Deviation` = c(

"Exhausted PureProfile participant pool before reaching required sample size",

"Exhausted participant pools of smaller demographic categories on both Prolific and PureProfile before reaching required sample size",

"(1) Unable to recruit enough participants in the US aged 18--30",

"Low rates of study qualification at 75% threshold, in large part due to substantial uncaptured Playstation play",

"Feedback from participants indicating that play during a 7-day period was subject to too many fluctuations (e.g., a busy workweek)",

"Feedback from participants indicating that evening plans often interfered with survey completion and thus adversely affected response rate",

"Imputing values for participants with 50--97% missing data is poorly justified; results from the preregistered analysis with imputation for all participants are reported in the Appendix (Sensitivity Analysis 5) and do not meaningfully differ from the main analysis"

)

) |>

tt() |>

style_tt(fontsize = 0.7) |>

style_tt(i = 0, bold = TRUE, align = "c")

```

:::

### Positive Controls

```{r}

#| label: positive-controls

#| code-summary: "Show code (Test positive controls)"

# Use first imputation for positive control check

cor_playtime <- cor.test(

dat$self_reported_weekly_play[dat$.imp == 1],

dat$play_minutes_24h[dat$.imp == 1],

method = "pearson"

)

cor_playtime_inline <- glue(

"r = {number(cor_playtime$estimate, .01)}, 95% CI [{number(cor_playtime$conf.int[1], .01)}, {number(cor_playtime$conf.int[2], .01)}]"

)

```

As specified in the preregistration, we assessed two positive controls designed to assess whether our data were capable of addressing our stated hypotheses. Both passed: self-reported playtime correlated positively with logged playtime (`r cor_playtime_inline`); and after preprocessing (e.g., to remove background sessions), there were no overlapping sessions. We therefore proceeded with the planned analyses.

### Analysis Approach

We tested each hypothesis using multilevel within-between regression models estimated with `glmmTMB` [@BrooksEtAl2017glmmTMB]. Focal predictors were within-person mean-centered, with 30-day person means included to separate day-level from 30-day aggregate associations. All models included random intercepts and AR(1) autocorrelation structures; H1 and H3 additionally included random slopes for day-level predictors, while H2 used random intercepts only due to convergence issues with the binary outcome. No demographic covariates were included, as the within-between specification controls for all time-invariant individual differences.

This approach isolates day-to-day dynamics: day-level coefficients estimate how much a person's outcome deviates from their own 30-day average when their predictor deviates from their own 30-day average. For example, H1's day-level coefficient answers: 'On days when individuals experience better game need satisfaction than their typical level, do they report higher global need satisfaction than their typical level?' The non-focal 30-day aggregate coefficients capture whether people with generally higher predictor levels also have generally higher outcomes across the study period. Random effects account for stable individual differences (e.g., personality, gaming preferences), and the AR(1) term models temporal autocorrelation in daily data. This specification does not adjust for time-varying confounds; estimates should be interpreted as associations rather than causal effects.

## Study 1 Results: Confirmatory

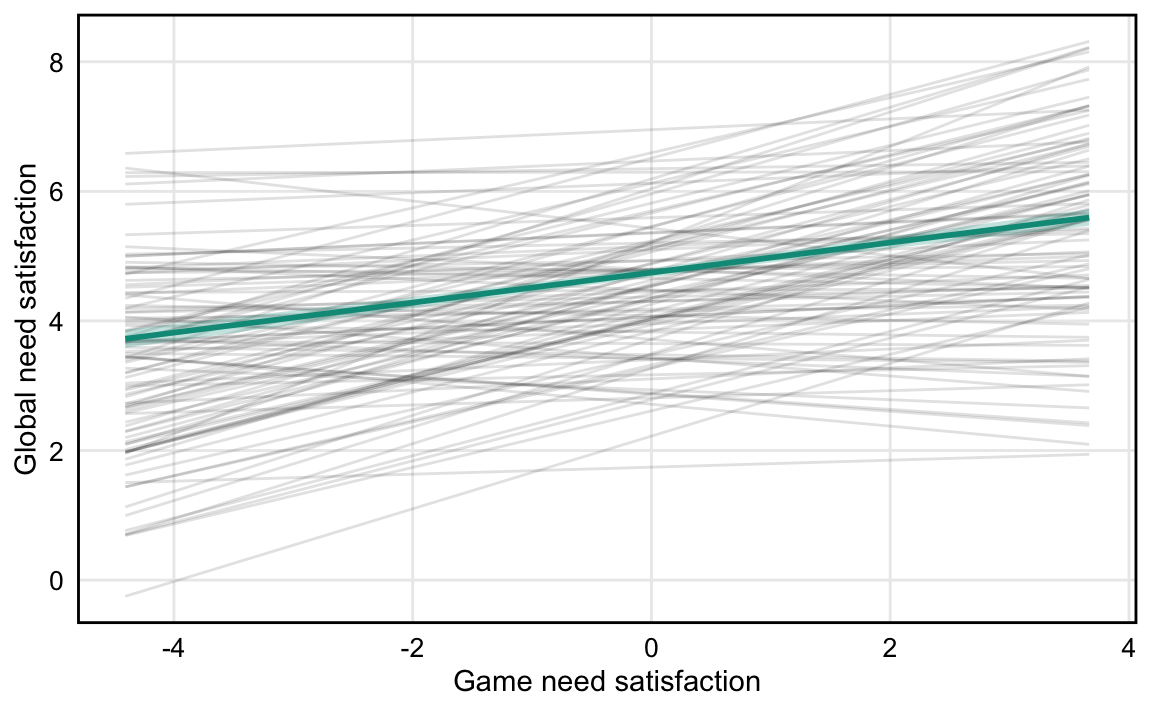

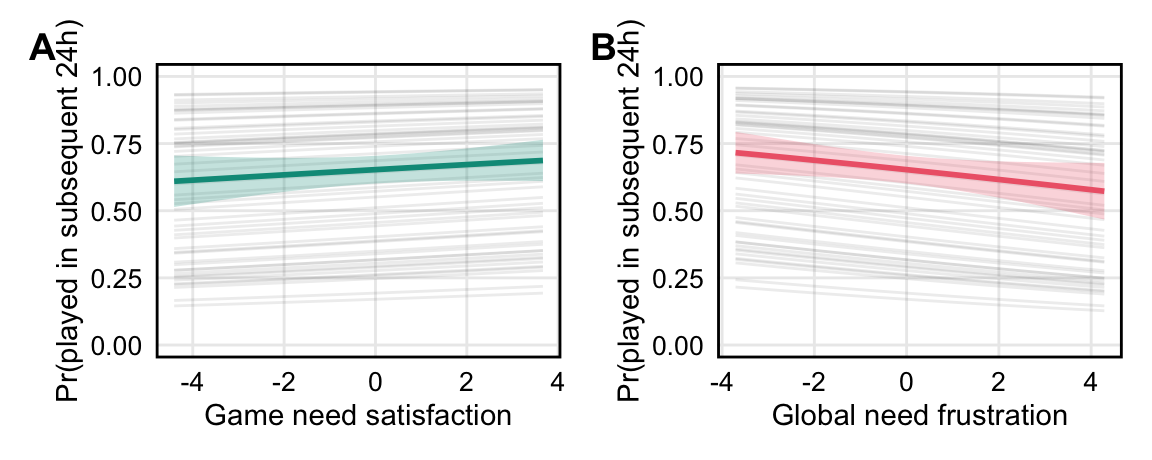

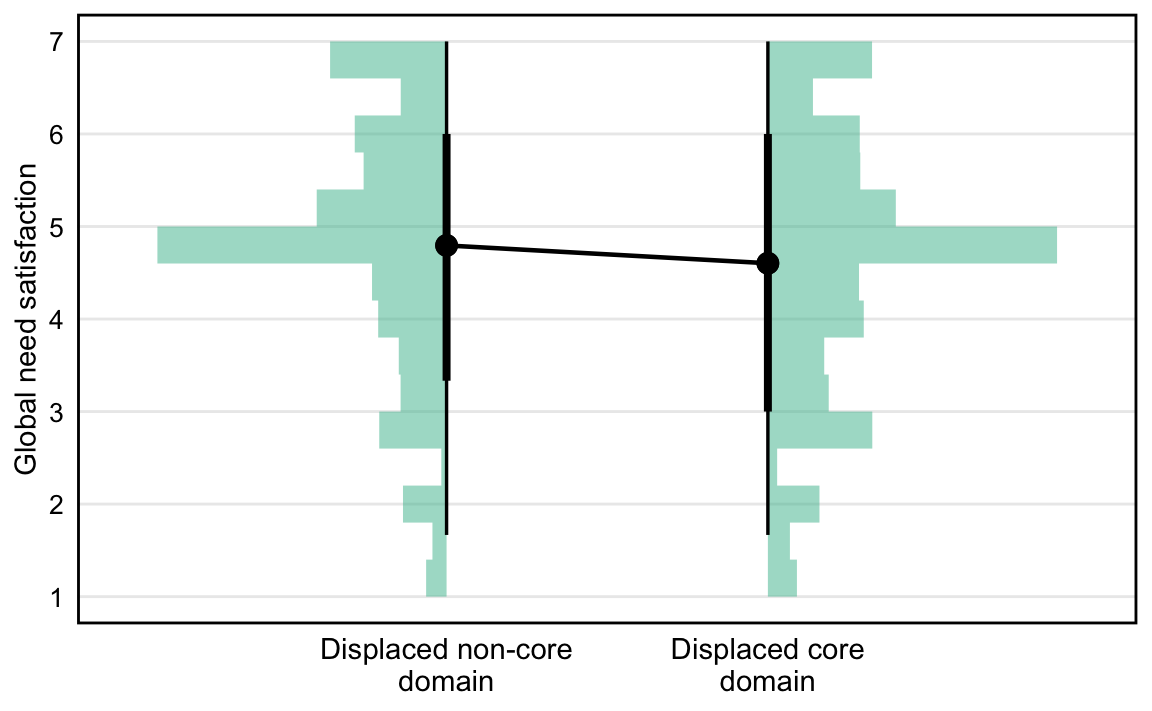

We present visualizations for each hypothesis test in turn; all key estimates are summarized @tbl-main-results.

### H1. Greater in-game need satisfaction is associated with greater global need satisfaction

```{r}

#| label: h1-model

#| output: false

#| code-summary: "Show code (Fit H1 models: game NS → global NS)"

# File-based caching to avoid re-running across multiple output formats

h1_cache_file <- "data/models/h1_fits.rds"

h1_pooled_file <- "data/models/h1_pooled.rds"

if (file.exists(h1_cache_file) && file.exists(h1_pooled_file)) {

message("Loading cached H1 model fits")

h1_fits <- readRDS(h1_cache_file)

h1_pooled <- readRDS(h1_pooled_file)

h1mod <- h1_fits[[1]]

} else {

message("Fitting H1 models...")

# Fit model to each imputed dataset

h1_fits <- lapply(1:m_imputations, function(i) {

message(glue("Fitting H1 model to imputation {i} of {m_imputations}"))

dat_i <- dat |> filter(.imp == i)

glmmTMB(

global_ns ~ game_ns_cw +

game_ns_cb +

(1 + game_ns_cw | pid) +

ar1(wave + 0 | pid),

data = dat_i

)

})

# Pool estimates using Rubin's rules

h1_pooled <- pool(h1_fits)